Crawling and Indexing 101: Make Your Website Search Friendly

Your website is full of valuable information that you want people to be able to find easily, but search engines can only see so much of your site unless you make it easy for them! Crawling and indexing are two core processes behind search engine optimization (SEO), and understanding how they work will help you optimize your site for better rankings, higher traffic, and more conversions.

This article will show you how to make your website easy to crawl and index so you can bring in more business through organic search!

What Exactly Is Crawling and Indexing?

Crawling and indexing are the foundation of your technical SEO efforts.

If your website isn't crawlable, search engines won't be able to find and index your content.

This can make it difficult for potential customers to find your site when they're searching for products or services like yours.

Crawling is when search engine bots discover new and updated content on the web, largely by following links from pages they already know about.

This content can be new pages, blog posts, images, or any other type of digital asset.

Once these pages are found, the search engine analyzes them and can add them to its index, a massive database of the content it has discovered on the internet.
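To make the two steps concrete, here is a toy Python sketch. It is nothing like a real search engine: it assumes a hypothetical in-memory site (the `PAGES` dictionary, mapping each URL to its text and outgoing links). `crawl` discovers pages by following links breadth-first from the homepage, and `build_index` stores the discovered text in a simple inverted index (word to URLs).

```python
from collections import deque

# Hypothetical mini site: URL -> (page text, internal links on that page).
PAGES = {
    "/": ("welcome to our seo agency", ["/services", "/blog"]),
    "/services": ("technical seo services", ["/", "/services/seo"]),
    "/services/seo": ("crawling and indexing audits", ["/services"]),
    "/blog": ("seo blog posts", ["/blog/sitemaps"]),
    "/blog/sitemaps": ("how xml sitemaps help crawling", []),
    "/orphan": ("nobody links here", []),
}


def crawl(start):
    """Crawling: discover pages by following links breadth-first."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        url = queue.popleft()
        order.append(url)
        for link in PAGES.get(url, ("", []))[1]:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return order


def build_index(urls):
    """Indexing: map each word to the set of discovered URLs containing it."""
    index = {}
    for url in urls:
        for word in PAGES[url][0].split():
            index.setdefault(word, set()).add(url)
    return index


discovered = crawl("/")
index = build_index(discovered)
print(discovered)
print(sorted(index["seo"]))
```

Note that `/orphan` is never discovered because no page links to it, so it never reaches the index either.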

Auditing Your Site to Ensure Your Pages Are Primed for Crawling and Indexing

1. Create an XML Sitemap

An XML sitemap is a file that has a list of all the pages on your website.

This makes it easy for search engines to find and crawl your site.

You can generate a sitemap automatically (many CMSs and SEO plugins do this) or write one by hand, then submit it to Google through Google Search Console.

In 2019, a Google representative stated that XML sitemaps are the “second most important source” for finding URLs.

An XML sitemap is like a map for your website that helps search bots understand and crawl your web pages.

Remember to update your sitemap whenever you add or remove web pages.
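As a minimal sketch, a sitemap following the sitemaps.org protocol can be generated with Python's standard `xml.etree.ElementTree` module. The `example.com` URLs and dates are placeholders; in practice you would pull the page list from your CMS or database.

```python
import xml.etree.ElementTree as ET

# Namespace required by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return a <urlset> document for (absolute_url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc          # full URL of the page
        ET.SubElement(url, "lastmod").text = lastmod  # date, e.g. 2024-01-15
    return ET.tostring(urlset, encoding="unicode")


# Placeholder pages for illustration only.
pages = [
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/services/seo", "2024-01-10"),
]

xml_body = '<?xml version="1.0" encoding="UTF-8"?>\n' + build_sitemap(pages)
print(xml_body)
```

You would save the output as a file such as `sitemap.xml` and regenerate it whenever pages are added or removed.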

2. Add Internal Links to All Your Deep Pages

Most websites have many pages, so those pages need to be organized in a way that lets search engines easily find and crawl them.

Internal linking is key when you want a specific deep page on your website (one buried several clicks away from your homepage) to be indexed by a search engine.

You can direct search engine crawlers to the page you want indexed by linking to it from other pages on your website.

Technical SEO services can help with this process.

If you have a flat architecture, you can usually prevent deep pages from being overlooked in the first place, because your “deepest” page will only be 2-3 clicks from your homepage.

Either way, if you have a set of deep pages that you want indexed, nothing beats good old-fashioned internal linking.

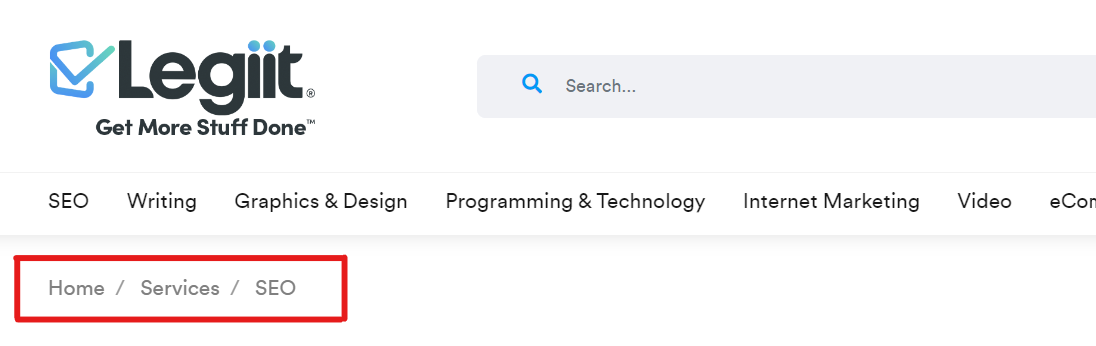
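One way to find deep pages that need more internal links is to measure click depth: the minimum number of clicks from the homepage. This sketch assumes a hypothetical internal-link graph (`links`) that you would export from a crawler or your CMS. It runs a breadth-first search from `/` and flags pages more than 3 clicks deep, in line with the 2-3 click guideline for flat architectures, as well as pages that no internal link reaches at all.

```python
from collections import deque


def click_depths(links, home="/"):
    """Breadth-first search from the homepage; returns {url: clicks from home}."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


# Hypothetical internal-link graph: page -> pages it links to.
links = {
    "/": ["/services", "/blog"],
    "/blog": ["/blog/page-2"],
    "/blog/page-2": ["/blog/page-3"],
    "/blog/page-3": ["/blog/old-post"],
    "/services": ["/services/seo"],
}
# Every known page, including one that nothing links to.
all_pages = set(links) | {t for targets in links.values() for t in targets}
all_pages.add("/landing-orphan")

depths = click_depths(links)
too_deep = sorted(p for p, d in depths.items() if d > 3)
unreachable = sorted(all_pages - set(depths))
print("More than 3 clicks deep:", too_deep)
print("Not reachable via internal links:", unreachable)
```

Pages in either list are good candidates for new internal links from higher-level pages.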

3. Add Breadcrumb Menus

If you want your website to be easy for search engines to crawl and index, consider adding breadcrumb menus.

Breadcrumb menus are a type of navigation that shows where a page sits in your site's hierarchy, helping both users and search engines understand the structure of your website.

They typically look like this: Home > Services > SEO.

Breadcrumbs should:

- Be visible to users so they can easily navigate your web pages without having to use the Back button.

- Use structured data markup (such as schema.org's BreadcrumbList) that gives accurate context to search bots crawling your site.
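For the structured markup, one common approach is schema.org `BreadcrumbList` data in JSON-LD format. This minimal sketch builds that markup for the Home > Services > SEO trail; the `example.com` URLs are placeholders, and the helper name `breadcrumb_jsonld` is hypothetical.

```python
import json


def breadcrumb_jsonld(trail):
    """Build BreadcrumbList data from an ordered list of (name, absolute_url)."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(trail, start=1)  # positions start at 1
        ],
    }


trail = [
    ("Home", "https://www.example.com/"),
    ("Services", "https://www.example.com/services"),
    ("SEO", "https://www.example.com/services/seo"),
]
markup = json.dumps(breadcrumb_jsonld(trail), indent=2)

# The JSON-LD goes inside a script tag in the page's HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The visible breadcrumb menu and this markup should describe the same trail, so users and search bots get consistent context.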

4. Audit Your Redirects

A redirect is a way to send visitors and search engines to a different URL from the one they originally requested.

Redirects are important because:

- They can help keep your website's traffic flowing if you need to change your URL structure for any reason.

- They can help you control how search engines index your website.

- They can help ensure that users end up on the right page on your website when they enter a URL that isn't quite right.

So, verify that all of your redirects are set up properly.

Fix any redirect loops (where a chain of redirects leads back to a URL it already passed through, so the visitor never reaches a final page), broken URLs, and improper redirects, since these can cause problems when your site is being crawled and indexed.
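If you can export your redirect rules as a simple source-to-target map (an assumption; how you get this depends on your server or CMS), a short script can trace each chain and flag loops. This sketch only inspects the map; confirming that each final URL actually loads still requires requesting it over HTTP. The function name `trace_redirect` is hypothetical.

```python
def trace_redirect(redirects, start, max_hops=10):
    """Follow redirects from `start`.

    Returns (status, path), where status is "ok", "loop", or "too_long".
    """
    path = [start]
    while path[-1] in redirects:
        target = redirects[path[-1]]
        if target in path:
            # Back at a URL already visited: a redirect loop.
            return "loop", path + [target]
        path.append(target)
        if len(path) - 1 > max_hops:
            return "too_long", path
    return "ok", path


# Hypothetical redirect rules: source path -> target path.
redirects = {
    "/old-seo": "/seo",
    "/seo": "/services/seo",  # chain: /old-seo -> /seo -> /services/seo
    "/a": "/b",
    "/b": "/a",               # loop: /a -> /b -> /a
}

for src in ["/old-seo", "/a"]:
    status, path = trace_redirect(redirects, src)
    if status == "ok" and len(path) > 2:
        status = "chain"  # resolves, but could be collapsed into a single hop
    print(status, " -> ".join(path))
```

Chains still resolve, but pointing each old URL straight at its final destination saves crawlers extra requests.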

5. Check the Mobile Responsiveness of Your Site

Mobile responsiveness is key for a website these days.

This means having a design that looks good on all screen sizes, as well as ensuring that your content is easy to read and navigate.

You also want to ensure your site loads quickly, as users are unlikely to wait more than a few seconds for a page to load.

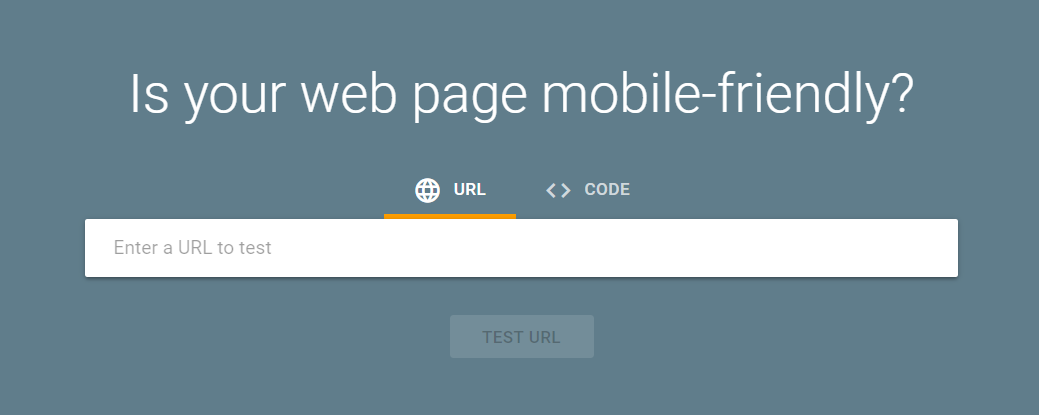

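Google's Mobile-Friendly Test tool used to be the quickest way to check the mobile responsiveness of your site, but Google retired it in December 2023.

Instead, you can run Lighthouse, which is built into Chrome DevTools and audits pages under mobile emulation. Run it on your URL, and it will give you a report on how well your site stacks up.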
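A quick static check you can script is whether a page declares a responsive viewport. This sketch uses Python's standard `html.parser` to look for `<meta name="viewport">` with `width=device-width`; the sample HTML and the `has_responsive_viewport` helper are made up for illustration. Treat it as a smoke test only: a viewport tag is a basic requirement for responsive layouts on mobile browsers, not proof that the page reads and navigates well on small screens.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Records the content of <meta name="viewport"> if the page has one."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)  # attribute names arrive lowercased
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.viewport = attributes.get("content")


def has_responsive_viewport(html):
    finder = ViewportFinder()
    finder.feed(html)
    if finder.viewport is None:
        return False
    # Ignore spacing differences such as "width = device-width".
    return "width=device-width" in finder.viewport.replace(" ", "")


page = ('<html><head><meta name="viewport" '
        'content="width=device-width, initial-scale=1"></head></html>')
print(has_responsive_viewport(page))
```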

Conclusion

To sum up, crawling and indexing are both essential for making your website search engine friendly.

Crawling is the process by which search engines discover new and updated pages, usually by following links and reading sitemaps.

Indexing, on the other hand, is the process of analyzing the information gathered during crawling and storing it in the search engine's index, so it can be retrieved quickly when someone searches.

Without both of these processes, your website would not be visible to searchers.